Possible Computing RET Projects and Themes

Research Project Overviews:

The Computing RET site will frame teacher centric projects via two research threads – (i) biologically and brain inspired computing models and (ii) physics-inspired computing models.

Thread 1:

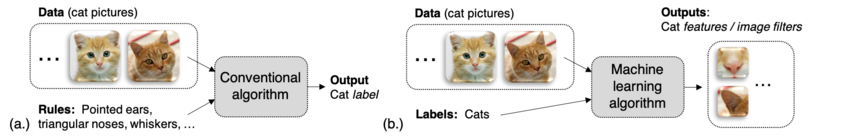

The first thread considers biologically (or brain)-inspired computing models such as machine learning (ML) algorithms [1] that are enjoying enormous success in language processing [2], medical imaging [3], etc. ML models and algorithms are fundamentally different from historical computing paradigms. A conventional algorithm/program is defined by input data and a set of rules on which data is evaluated. Rules generate an answer to a problem of interest based on the input. In a ML algorithm, data and the labels of said data (answers) are provided as inputs, and rules are learned that can be applied to new data [4]. Per Fig. 1, we may wish to know if an image contains a cat. With a conventional approach (Fig. 1a), we first develop rules that specify features associated with cats. With a ML approach, we begin with images that were known to be cats, and subsequently learn rules to identify pictures of unseen cats. Put another way, the filters shown in Fig. 1b could be applied over all parts of a new image to infer if an image contained many features associated with cats. As more features are found, the likelihood of an image containing a cat increases. If a user was then interested in identifying new animals in lieu of cats, he/she would not need to develop a new set of rules, but rather could use the same process to learn filters associated with a new animal.

Figure 1: (a) Conventional algorithm vs. (b) machine learning algorithm.

Hardware being developed by researchers at ND and elsewhere targets the computational needs of ML algorithms. Of great interest are hardware architectures comprised of new technologies [5-8] that are (i) compatible with existing MOSFET technology and (ii) enable merged logic and memory fabrics where logic and the memory that stores data for said logic are integrated at finer granularities, and not on separate chips [9-14]. These fabrics can support an important computation in ML algorithms such as multiply-accumulate (MAC) operations (y=wixi – where xi represents an input pixel value of a cat image per Fig.1 , and wi represents a learned filter weight; higher values are indicative of a feature being present). Per [15], a single MAC operation requires ~3.2 pJ of energy. However, if a weight value wi is stored in off-chip memory and brought to the processor for computation, 640 pJ of energy are required just to fetch the filter value! The energy of the memory request overwhelms that of the computation. Co-locating more weight data with logic for ML computations could enable more sophisticated algorithms in battery-powered edge devices in the internet of things (IoT).

Thread 2:

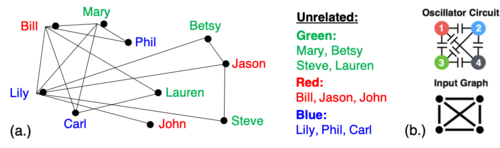

The second thread considers computational models inspired by physics. The application of the von Neumann computing model to problems such as associative computing and optimization is challenging. Consider the graph coloring optimization problem with a goal of finding a minimum set of colors such that any nodes in a graph that are connected by an edge do not share the same color. Vertex coloring has applicability to scheduling [16], resource allocation [17], etc. For example, consider the social network in Fig. 2a, where individuals are represented by nodes, and are connected by edges if they are related. By solving the graph coloring problem, we can identify unrelated individuals.

Figure 2: (a) social network; colors can suggest unrelated individuals; (b) capacitively coupled oscillators.

For combinatorial optimization, the optimal value of a function must be computed within a domain set that is discrete or combinatorial; graph coloring is an NP-hard problem, and an algorithm may search the entire domain set. This often necessitates a heuristic approach, which does not guarantee an optimal solution. Interestingly, systems of coupled oscillators can be used to perform a vertex coloring of random graphs in a fast and energy efficient manner [18]. Experimentally, the dynamics of relaxation oscillators can be used to find solutions to the vertex coloring problem for unweighted and undirected graphs [19]. When oscillators are capacitively coupled such that they are topologically equivalent to an input graph (Fig. 2b), their steady-state phases will approximate a solution to the graph coloring problem. Hardware kernels based on coupled oscillators are being studied via research at ND [20, 21]. Interestingly, similar technologies can also be used to implement spiking neural networks [22] (related to Thread 01).

Example Projects:

- Project 1: Participants will explore how hashing functions and the hardware kernels that support them can improve the performance, energy efficiency, and or capabilities of machine learning models that are augmented with external memory and/or other machine learning models that are amenable to hashing functions. Application-level targets include image classification and language processing tasks.

- Project 2: Teachers will develop one-shot/few-shot learning models (i.e., where a neural network can learn how to complete a task, perform a new classification, etc. with just a few training examples) that are applicable to robotics problems – and that can be realized via fast/energy efficient hardware given the frequent constraints of this application space is of great interest (e.g., battery life).

- Project 3: ND personnel are investigating new hardware kernels that can perform MAC operations for a neural network in the analog domain. Participants will explore the impact of analog hardware on emerging machine models. Standard current-voltage models may be used. This may come in the form of circuit-level simulations. For example, one challenge to this approach is how device-to-device variations will impact application-level accuracy.

- Project 4: Application-level case studies may stem from ND faculty member Kevin Bowyer’s research among others. As a representative example, Bowyer (a leader in the field of biometrics) regularly employs ML algorithms in his research on iris biometrics – as scans of an individual’s iris may represent a more robust mechanism for identifying a given individual (at passport control, as a password, etc.) However, challenges must be overcome in the context of spoofing attacks (e.g., where an individual may wear contact lenses to mask a recorded iris signature). Labeled attack data is limited and is constantly changing, making it challenging to develop a ML model that can refute an attack. Developing efficient and accurate ML models with limited datasets – inspired by human learning models [23] – is thus of great interest.

- Project 5: Teachers will conduct research with the Bowman Creek Educational Ecosystem (BCe2) project, in the area of remote sensing and Internet of Things (IoT) technologies. One of the challenges in the neighborhood is flooding and storm water runoff around Bowman Creek, sections of which were channelized into underground pipes that literally run under the Riley HS football field. Teachers will work to develop Arduino-based systems for collecting data from the creek and wirelessly uploading for analysis. In particularly, they will consider approaches for measuring the effectiveness of "rain gardens" using local plants with deep roots systems as a means for collecting storm water runoff. Technologies included the use of soil moisture sensing and the development of novel approaches for measuring water inflow and outflow from the gardens that was not absorbed. Machine learning models can be applied in this work.

- Project 6: The objective of this project is to automatically generate English descriptions of software source code. For example, to create comments for source code that does not already have comments in it. The descriptions have two uses: one, to include with software to help programmers understand code, and two, as tools to help visually impaired programmers and students navigate code more easily. The strategy that the project will follow is: (1) perform empirical study to determine the process that human programmers use to write descriptions, (2) design algorithms that mimic a portion of that process, and (3) evaluate the effectiveness of those algorithms.

- Project 7: New information is being produced and curated at an increasing rate, and as a result, economies and industries will depend heavily on effective and accurate storage and retrieval of information during the decision-making process and during opinion formation. Teachers will work closely with faculty and graduate students to learn the how search engines like Google, Bing and Yahoo perform keyword searches, and how they are weaving information provenance and fact checking into their query results. To that end, they will improve their understanding on how terms and documents are represented and compared digitally, how massive storage systems quickly retrieve results, and how everyday users naturally parse and refine complex questions into a handful of key words, and how deep neural networks and other machine learning tools are being used to determine the trustworthiness of the information.

- Project 8: Teacher will study the collective dynamics of physical devices, and investigate non-Boolean information processing architectures that fundamentally embrace the “let the physics do the computing” paradigm. Participants will explore the fundamental limits of computational complexity that are associated with such devices, and consider how practical issues such as oscillation frequency, synchronization latency, inherent device-to-device variability, etc. can impact an application-level task. Note that such systems might ultimately be capable of providing solutions to problems that are deemed to be “computationally hard” – i.e., they cannot be solved in polynomial time (where time is a polynomial function of input size), and typically require exponential time. Examples of such problems include assigning colors to nodes of a graph such that two connected nodes do not have the same color. (This problem has direct applicability to various resource allocation problems – e.g., airline scheduling, etc.) While there is obvious applicability to physics-based courses, there are also direct linkages to various math courses. As an example, one might have students determine what polynomial function, exponential function, etc. describes the complexity of a problem, and then use these expressions to compare run times, etc.

- Project 9: The recent push towards harnessing the vast amounts of data available for smart cities also includes smart control of water resources. An important means of method of managing water resources includes the use of green spaces to manage rain water run-off. However, managing these green spaces is taxing and imposes a large cost on cities. Automating the monitoring and management of such spaces can lower the cost of adopting such approaches. As part of this automation, researchers at ND are developing means of harvesting energy from the green spaces to power lightweight sensors and wireless communication systems. As a key first step, participants will develop an energy harvesting system to extract power from existing trees in the South Bend area. This will be interfaced with low-power communication and computation systems. This project would be amenable to activities and classes associated with introductory electronics and physics.

-

Project 10: Using coupled oscillators to enable parallel discrete optimization is an emerging paradigm that holds immense promise. Similar principles also lead to the synchronization between fireflies or synchronization between human finger tapping. We’d like to see if we can take similar principles to firefly synchronization and create optical systems based on LDRs and LEDs to develop bracelets that might flash synchronously based on proximity. We will then analyze some properties of graphs based on such oscillatory systems.

Example Projects:

-

V. Sze, Y.-H. Chen, T.-J. Yang, and J. S. Emer, "Efficient processing of deep neural networks: A tutorial and survey," Proceedings of the IEEE, vol. 105, no. 12, pp. 2295-2329, 2017

-

R. Collobert and J. Weston, "A unified architecture for natural language processing: Deep neural networks with multitask learning," in Proceedings of the 25th international conference on Machine learning, 2008, pp. 160-167: ACM

-

H. Greenspan, B. Van Ginneken, and R. M. Summers, "Guest editorial deep learning in medical imaging: Overview and future promise of an exciting new technique," IEEE Transactions on Medical Imaging, vol. 35, no. 5, pp. 1153-1159, 2016

-

F. Chollet. (2018, September 12). Keras: The Python Deep Learning Library. Available: https://keras.io/

-

S. Salahuddin. (2017, September 12). Salahuddin NCFET Consortium. Available: http://www.techdesignforums.com/blog/2016/04/07/intel-tsmc-globalfoundries-post-finfet-chenming-hu-uc-berkeley/

-

M. Trentzsch et al., "A 28nm HKMG super low power embedded NVM technology based on ferroelectric FETs," in Electron Devices Meeting (IEDM), 2016 IEEE International, 2016, pp. 11.5. 1-11.5. 4: IEEE

-

H.-S. P. Wong et al., "Metal–oxide RRAM," Proceedings of the IEEE, vol. 100, no. 6, pp. 1951-1970, 2012

-

S. Yuasa et al., "Future prospects of MRAM technologies," in Electron Devices Meeting (IEDM), 2013 IEEE International, 2013, pp. 3.1. 1-3.1. 4: IEEE

-

P. Chi et al., "Prime: A novel processing-in-memory architecture for neural network computation in reram-based main memory," in ACM SIGARCH Computer Architecture News, 2016, vol. 44, no. 3, pp. 27-39: IEEE Press

-

D. Ielmini and H.-S. P. Wong, "In-memory computing with resistive switching devices," Nature Electronics, vol. 1, no. 6, p. 333, 2018

-

N. Talati, S. Gupta, P. Mane, and S. Kvatinsky, "Logic design within memristive memories using memristor-aided loGIC (MAGIC)," IEEE Transactions on Nanotechnology, vol. 15, no. 4, pp. 635-650, 2016

-

S. Jain, A. Ranjan, K. Roy, and A. Raghunathan, "Computing in memory with spin-transfer torque magnetic ram," IEEE Transactions on Very Large Scale Integration (VLSI) Systems, vol. 26, no. 3, pp. 470-483, 2018

-

M. Jerry et al., "Ferroelectric FET analog synapse for acceleration of deep neural network training," in Electron Devices Meeting (IEDM), 2017 IEEE International, 2017, pp. 6.2. 1-6.2. 4: IEEE

-

D. Reis, M. Niemier, and X. S. Hu, "Computing in memory with FeFETs," presented at the Proceedings of the International Symposium on Low Power Electronics and Design, Seattle, WA, USA, 2018

-

S. Han et al., "EIE: Efficient Inference Engine on Compressed Deep Neural Network," in 2016 ACM/IEEE 43rd Annual International Symposium on Computer Architecture (ISCA), 2016, pp. 243-254

-

F. T. Leighton, "A graph coloring algorithm for large scheduling problems," 1979

-

T.-K. Woo, S. Y. Su, and R. Newman-Wolfe, "Resource allocation in a dynamically partitionable bus network using a graph coloring algorithm," IEEE Transactions on Communications, vol. 39, no. 12, pp. 1794-1801, 1991

-

A. Parihar, N. Shukla, M. Jerry, S. Datta, and A. Raychowdhury, "Computational paradigms using oscillatory networks based on state-transition devices," in Neural Networks (IJCNN), 2017 International Joint Conference on, 2017, pp. 3415-3422: IEEE

-

A. Parihar, N. Shukla, M. Jerry, S. Datta, and A. Raychowdhury, "Vertex coloring of graphs via phase dynamics of coupled oscillatory networks," Scientific reports, vol. 7, no. 1, p. 911, 2017

-

Semiconductor Research Corporation. (2018, September 12). Energy Efficient Computing: from Devices to Architecture. Available: https://www.src.org/program/nri/e2cda-nri/

-

University of Notre Dame College of Engineering. (2018, September 12). Extremely Enegy Efficient Collectice Electronics. Available: https://collectivecomputing.nd.edu/

-

M. Jerry, A. Parihar, B. Grisafe, A. Raychowdhury, and S. Datta, "Ultra-low power probabilistic IMT neurons for stochastic sampling machines," in VLSI Technology, 2017 Symposium on, 2017, pp. T186-T187: IEEE

-

O. Vinyals, C. Blundell, T. Lillicrap, and D. Wierstra, "Matching networks for one shot learning," in Advances in Neural Information Processing Systems, 2016, pp. 3630-3638